What Perplexity Does Best

Perplexity is genuinely excellent at what it is built for: real-time web search with source-cited answers; competitive research that pulls current data from across the internet; technical research that synthesizes documentation, forums, and blog posts into coherent summaries; and market analysis grounded in actual sources you can verify.

If you want to understand what competitors are shipping, what the market thinks of a technology, or how a concept works at a general level, Perplexity is one of the best tools available. The discovery phase of feature planning benefits enormously from this kind of research capability.

The problem is not Perplexity. The problem is what happens next.

Where Research Stops and Planning Starts

Research and planning are sequential phases, not the same activity. Perplexity excels at research. It has four gaps that prevent it from crossing into planning.

- No codebase awareness. Perplexity searches the web, not your repository. It cannot tell you how a feature fits your existing architecture, which of your patterns it conflicts with, or what your data models already support. Its suggestions are necessarily generic: useful context, not actionable implementation guidance.

- Research is not planning. Knowing what competitors have built does not tell you how to implement it in your codebase with your conventions, your component library, and your team's established patterns. The gap between competitive intelligence and an implementable plan is substantial.

- No structured output. Perplexity gives you paragraphs and citations. Not user stories. Not acceptance criteria. Not sprint items with defined scope. The output is informative prose, which still needs to be translated into the structured artifacts that engineering teams actually work from.

- No execution path. Research informs decisions. It does not create branches, implement code, open pull requests, or track what was built against what was planned. The gap between a Perplexity answer and a shipped feature is the entire engineering workflow.

How Feature1 Picks Up Where Perplexity Stops

The workflow is sequential, not competitive. Use Perplexity for competitive research and market context. Bring those insights into Feature1's F1 Assistant for codebase-aware planning that turns research into something that can actually be built.

Feature1 has built-in competitor analysis that connects to your domain, so the competitive intelligence you gather in Perplexity can be structured against your actual product context rather than treated as abstract market data.

From there, the pipeline connects every stage. See how it works in full:

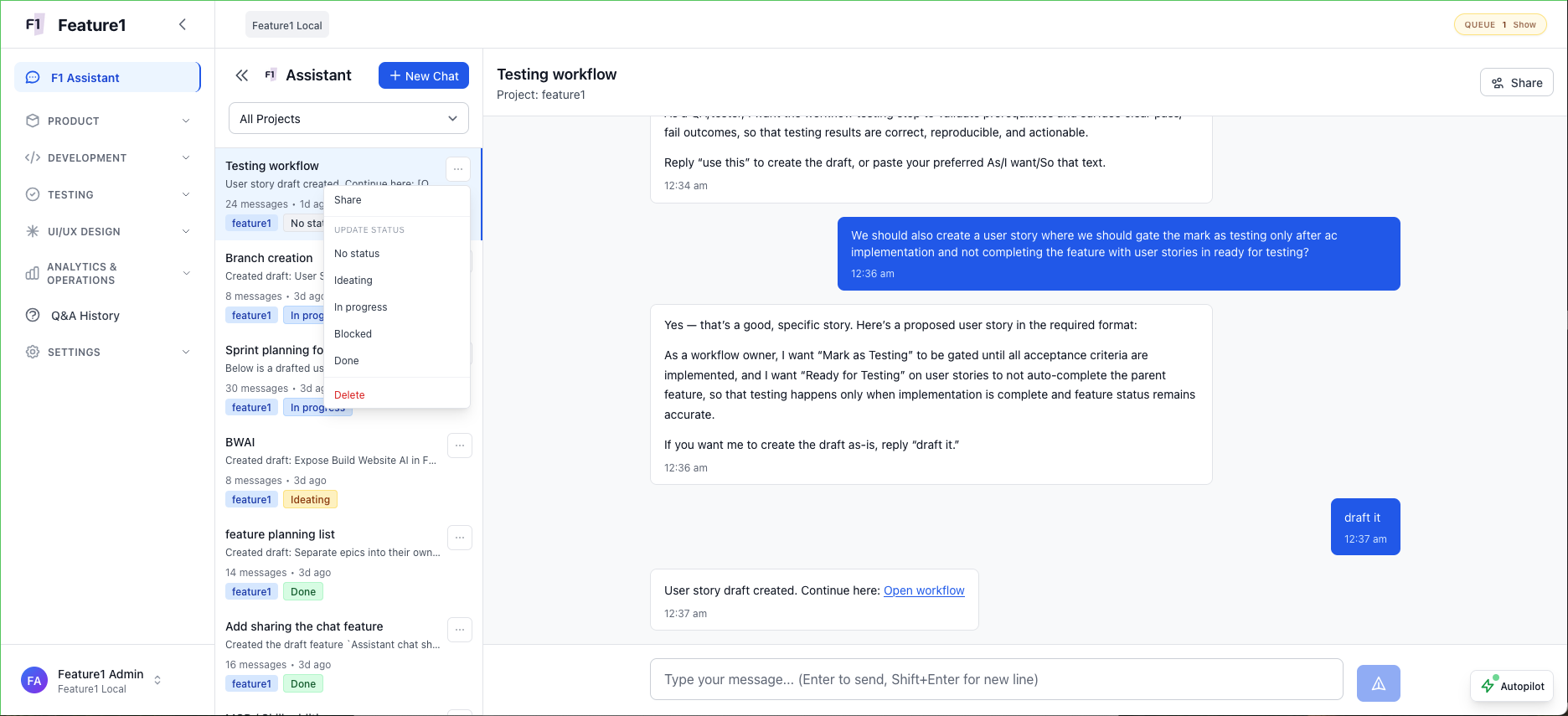

F1 Assistant: bring research insights into a shared thread, then turn them into user story drafts with one message.

- Research insights become structured feature plans inside Feature1

- Feature plans generate user stories with defined scope and acceptance criteria

- Acceptance criteria are implemented by AI in Autopilot or Copilot mode

- Pull requests are created automatically. Because your repo is connected, every commit, diff, and branch is visible and traceable to the original plan

- The research-to-shipped-feature pipeline is complete and auditable

The Domain Spec gives Feature1's AI a living knowledge graph of your codebase (architecture, patterns, conventions, data models), so every plan generated from your research is grounded in what actually exists rather than in a generic approximation.

What Each Tool Actually Gives You

Here is a direct comparison across the capabilities that matter when you are trying to move from an idea to a shipped feature.

Perplexity:

- Best-in-class web research

- Source-cited answers

- Competitive intelligence

- Stops at information

Feature1:

- Codebase-aware planning

- Domain Spec context

- Built-in competitor analysis

- Information → Plan → Code → PR (repo connected, full change visibility)

Explore the full platform feature set or see how it works to understand the complete workflow.

Use Both

Perplexity answers the "what should we build?" question. Feature1 answers the "how do we build it and ship it?" question. These are sequential questions, not competing ones.

The mistake is treating research as a substitute for planning. Gathering competitive intelligence is a discovery activity. Translating that intelligence into a codebase-aware plan, structured user stories, testable acceptance criteria, and implemented code is a different activity entirely, and it requires tools built for it.

Use Perplexity at the start of the workflow. Use Feature1 for everything after. Research is the input. Shipped features are the output.